By Jenny Garrett OBE | Leadership Expert, Executive Coach, MIT AI Strategy Alumna | April 2025

Based on the Conscious Leaders, Conscious AI fireside chat with Albana Vrioni, Executive Director, Women on Boards Albania

Reading time: approximately 12 minutes | Includes video clips from the full conversation

Most conversations about AI leadership start in the wrong place. They start with tools, platforms, and risk checklists. They ask whether leaders have the right AI courses on their CV. They treat this as a technical problem waiting for a technical solution.

It is not.

I was recently in conversation with Albana Vrioni, Executive Director of Women on Boards Albania, as part of her Conscious Leaders, Conscious AI series. Albana opened with a set of statistics from governance surveys by PwC, EY, NACD and Diligent that stopped me in my tracks.

55% of directors privately believe a fellow director should be replaced. 85% believe that speaking out on sensitive issues is more dangerous than staying silent. Boards say publicly they are AI-ready. Privately, they are wrestling with something harder to name.

That gap – between the public position and the private reality – is where this conversation lived for 45 minutes. And it is where the real work of AI-ready leadership begins.

This article draws directly from that conversation. It is structured around the questions Albana asked, and the answers I gave. I have also flagged where you can watch the relevant clip from the full session, so you can hear the exchanges in full.

What Leaders Are Really Saying About AI – The Private Version

When I am in rooms with senior leaders – boards, executive teams, Chief People Officers – I hear two modes. The first is urgency bordering on panic. The second is paralysis.

They are terrified of being left behind. But they are equally terrified of getting it publicly wrong. So they lurch between making rushed decisions they later have to reverse, and doing nothing at all while calling it strategic caution.

The public narrative is: we have an AI strategy. The private reality is usually: we have a working group and a pilot. They have not thought it through. They have not put the resources, expertise or governance in place. They are performing readiness they do not yet have.

This gap is well documented. The governance surveys by PwC, EY, NACD and Diligent that Albana cited at the start of our conversation show how much directors keep private, from doubts about their peers to a reluctance to speak out on sensitive issues.

Source: PwC Annual Corporate Directors Survey 2024 — https://www.pwc.com/us/en/services/governance-insights-center/annual-corporate-directors-survey.html

Source: NACD Board Practices and Oversight Survey — https://www.nacdonline.org/insights/publications.cfm?ItemNumber=67009

What is the gap between what boards say publicly about AI and what they are privately grappling with?

Most boards publicly claim to have an AI strategy. Privately, they have a pilot and a working group. The real questions being asked behind closed doors are: who is accountable when an AI decision goes wrong? How do we make governance decisions about systems we do not fully understand? And how do we tell our workforce the truth about what is coming?

One example illustrates this well. I was recently with a peer group of senior practitioners, and someone described a Chief Information Officer at a mid-to-large company – a person with full organisational responsibility for technology – who was quietly using an external AI tool on the side. The company had built its own internal model and told everyone to use it. The CIO knew that model's limitations, so they went outside the rules to get their job done.

If the CIO is doing it, what is everyone else doing? And does the board know?

This is the shadow AI problem. It is not a junior employee problem. It is a leadership and governance problem. And almost nobody is measuring it.

The trust gap is not just forming between leadership and technology. It is forming between organisations and the people they serve – because no one is clearly taking responsibility when things go wrong.

The Microsoft Copilot example makes this concrete. Look closely at the terms and conditions and you will find it described as an entertainment product. If it is an entertainment product, it should not be informing employment decisions, performance reviews, or strategic recommendations. But it is embedded in workplace tools across thousands of organisations. When something goes wrong – and it will – who is accountable? The C-suite? The middle manager who implemented it? The tool itself? The vendors have made their position clear. They do not want the liability.

This accountability vacuum is one of the defining governance challenges of our moment. And most boards have not yet named it.

What AI-Ready Leadership Actually Means – And Why Literacy Is Not Enough

There is a version of the AI-ready leadership conversation that focuses entirely on skills. Tools. Prompts. Use cases. Certifications. It is useful. It is also not sufficient.

The deeper leadership challenge is something different: judgement under uncertainty at speed. Making consequential decisions about systems that are moving faster than our governance frameworks can follow. Knowing when to act and when to pause. Understanding what you are choosing not to do, and why.

The Good Samaritan Problem

In our conversation, Albana asked me directly about the urgency leaders are feeling around AI. It brought to mind a piece of research – the Good Samaritan study – that I think explains more about AI adoption than most leadership frameworks.

In the study, participants were told they had to get to another building to give a talk. Some were told they were already late. Others were told they had time to spare. On the way, every participant passed a man slumped in a doorway, apparently in distress.

Both groups had previously been asked about their values. Both had said they believed in compassion, care, looking out for others. Yet most of those told they were in a rush walked past the man. Most of those told they had time stopped.

We are rushing towards somewhere we have not clearly defined. And in that rush, we are willing to bypass our own values, our common sense, and our thoughtfulness to get there. The question every board needs to ask is: what are we rushing towards?

This is not an argument for slowing down AI adoption. It is an argument for slowing down the decision-making. Taking a breath before you automate something. Asking whether the speed at which you are moving is strategic – or fear-driven.

Is This a Governance Problem or a Leadership Problem?

Both. And they are connected in a way that most organisations have not yet addressed.

The governance failure looks like this: no board-level accountability for AI decisions. No audit process for algorithmic impact. No agreed framework for which decisions require human oversight and which can be safely automated. Governance is being asked to catch up with adoption that has already happened.

The leadership failure looks like this: senior leaders are avoiding the conversation because they do not feel expert enough to lead it. They confuse AI literacy – knowing what the tools do – with AI-ready leadership, which is about judgement, values and accountability. These are not the same thing.

What is the difference between AI literacy and AI-ready leadership?

AI literacy means understanding what AI tools can do – prompts, use cases, platforms. AI-ready leadership means having the judgement to decide what AI should and should not be used for, who is accountable when things go wrong, and how to build the governance infrastructure to make those decisions well. You do not need to understand the technology to lead it. You need to understand its impact.

The question I find most clarifying for any board: what decision is AI currently making in your organisation that this board has never explicitly approved?

In most rooms, that question produces silence. Which is itself the answer.

What Leaders Should Never Use AI For – And How to Make That Call

One of the counterintuitive points I make consistently is this: a leader’s most important AI decision is not what to use AI for. It is what never to use it for.

Most organisations are so focused on adoption – what can we deploy, what efficiencies can we find, what can we automate – that they have skipped a more fundamental question. What should remain irreducibly human?

The Root Principle – Staying Human on Purpose

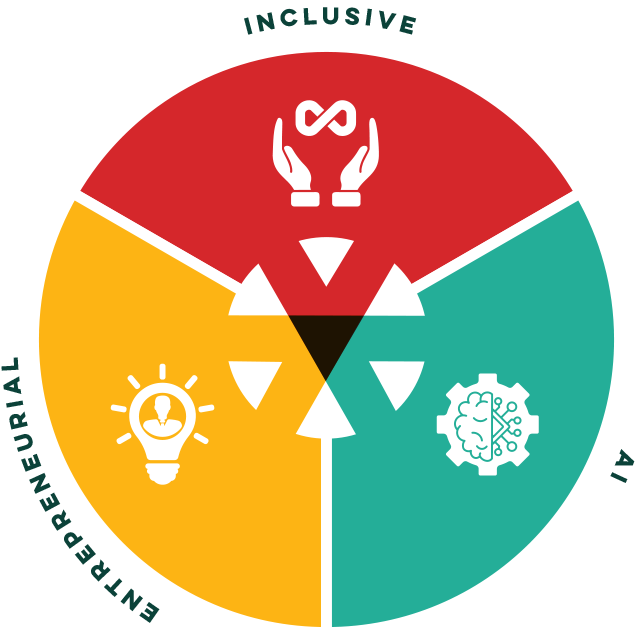

In the HEARt of AI™ framework I developed for AI-ready leadership, the R stands for Root. It means staying deliberately rooted in what is human – not as sentiment, but as strategy.

The decisions you should never automate are the ones where the relationship is the point, not just the outcome. Where the human presence is not incidental to the process – it is the process.

Performance conversations. Redundancy decisions. Safeguarding. Bereavement support. These are not inefficiencies waiting to be optimised. They are the moments that define what kind of organisation you are. Automating them does not make them more efficient. It makes them meaningless.

AI can give you advice and knowledge. It cannot sit in a room with a person and unlock their real potential. Even when an AI expresses empathy, it is not real empathy. It is what it has been trained to say.

How to Make the Call

There are two questions I return to consistently when helping leaders think through this.

The first: if this decision goes wrong, could I explain to the person affected why a machine made it? If the answer is no – if the explanation would feel inadequate, unjust or inhumane – that is your line.

The second: does this process require contextual judgement that comes from lived experience? AI has data. It does not have wisdom. The gap between those two things is exactly where the most consequential decisions live.

What decisions should leaders never automate with AI?

Decisions where the human relationship is the point, not just the outcome. These include performance conversations, redundancy decisions, safeguarding, bereavement support, and any decision where the affected person could not reasonably accept a machine as the decision-maker. The test: could you explain to the person affected, in a way that felt just, why AI made this call? If not, keep the human in the loop.

The EQUITAS framework – which runs through my forthcoming book AI for Equity – gives a third lens. The U stands for: Understand what you are automating. Before you automate any process, ask who designed it, and whose interests it was designed to serve. If you automate an inequitable process at scale, you do not solve the inequity. You industrialise it.

The IKEA Reframe – Creating Value Rather Than Cutting Cost

Most organisations approach AI through a cost reduction lens. How many roles can we remove? How much headcount can we save?

IKEA offers a different model. They introduced AI into their customer service call centres, which displaced call centre staff. Rather than making those people redundant, they looked at where growing customer demand existed across the business. Interior design services was one answer. They redeployed call centre workers into interior design roles, trained with the support of AI. The call centre still operates. A new revenue stream now exists. And people whose roles were displaced have been given new ones.

That is what AI-ready leadership looks like. The question is not how to save money on people. It is how to create more value with people and AI together.

It requires a different question at the board level. Not what can AI replace? But what can people do better, with AI alongside them?

Why Equity Belongs on the AI Agenda – Not Just the Ethics One

When Albana asked me why equity belongs on the AI agenda, the question landed differently than I expected. It sharpened something I have been trying to articulate for years.

Ethics is a conversation about values. Equity is a conversation about risk, liability and long-term institutional sustainability. And right now, most boards are having the first conversation and calling it the second.

For board directors and governance professionals, this distinction matters enormously. Equity is not a moral add-on to the AI agenda. It is a governance imperative with legal, reputational and commercial consequences.

The EU AI Act, which entered into force in August 2024 and whose obligations are being phased in from 2025, classifies AI systems used in employment, recruitment and workforce management as high-risk. UK employment law is evolving in parallel. This is no longer a nice-to-have. It is an audit and compliance matter.

Source: EU AI Act official text and implementation timeline — https://artificialintelligenceact.eu

Source: UK Government AI Regulation Policy Paper — https://www.gov.uk/government/publications/ai-regulation-a-pro-innovation-approach

The Data Problem

If your AI systems are trained on historical data, they are encoding historical inequity. This is not a theoretical concern. It is already happening, often invisibly, in recruitment, progression, performance assessment and resource allocation.

A concrete example. An organisation wanted to recruit nurses. They used Google Ads to target potential applicants, specifying they wanted to reach candidates of any gender. Because historical data associated nursing predominantly with women, the algorithm directed the advertising almost exclusively at women. The organisation’s stated intent was neutrality. The outcome was a perpetuation of existing patterns.

This is what happens when you accelerate at scale without examining the assumptions built into your systems. You do not solve bias. You amplify it.

Why does equity belong on the board AI agenda and not just the ethics agenda?

Because equity is a risk question, not just a values question. AI systems trained on historical data encode historical inequity – in hiring, progression, pay and access. The EU AI Act and evolving UK employment law are making this an audit and compliance matter. Organisations that fail to address equity in their AI systems face legal exposure, reputational risk, and the long-term business cost of a less diverse, less representative workforce.

The Two-Tier Workforce

There is a second equity dimension that does not get enough board-level attention: who has access to AI capability inside the organisation.

According to research from the IBM Institute for Business Value, the more senior you are, the more likely you are to have received AI training. Frontline workers, deskless workers, and less senior staff are being left to figure it out themselves. They are being asked to adapt to a technology they have not been given the tools to understand.

Source: IBM Institute for Business Value – The AI Talent Gap 2024 — https://www.ibm.com/thought-leadership/institute-business-value/en-us/report/ai-talent

At the pace AI amplifies capability, this gap widens every week. The organisations investing in AI literacy at the top while leaving the rest of the workforce to fend for themselves are not just creating an internal equity problem. They are creating a strategic risk.

The risk is sharpest for women, because many of the roles being automated first – administrative, part-time, supporting service roles – are disproportionately held by women. If those roles disappear before the pipeline of women coming through the organisation has had a chance to develop, you will have a less diverse leadership cohort in five years. And a less representative organisation makes worse decisions about the customers and communities it serves.

The 2034 Convergence

Albana raised a statistic in our conversation that I think every board should sit with.

At the current pace, women will reach boardroom parity by 2034. That is the same year by which AI is projected to automate the vast majority of corporate decisions. If both projections hold, most organisations will have deployed advanced AI systems while still being governed primarily by men.

If we keep to the pace we are currently setting, we will have institutionalised bias at machine speed before governance reform catches up.

This is not a prediction about failure. It is a warning about the cost of moving fast without looking at who is in the room when the decisions are made – and who is not.

What Leaders Navigating This Well Have in Common

I work with leaders across sectors, organisational sizes, and countries. The ones navigating AI adoption well are not always the most technically advanced. They share something different.

They Have Separated What AI Can Do From What They Will Use It For

In principle, AI could be applied to almost everything, if you let it. The leaders getting this right have deliberately decided what they want to use it for and, equally, what they will not use it for. That is a values conversation, not a technology one. And they are having it at the most senior level.

They Have Built Psychological Safety Around AI

In organisations where AI is handled well, people can say 'I do not understand this' without career penalty. That matters because when there is fear and no safety, nobody challenges bad decisions. Nobody says the obvious thing. Nobody flags what is not working. Leaders who create space for honest questions get better information – and make better decisions.

They Have Moved From Piloting to Governance

There is a significant difference between running AI pilots and governing AI adoption. Most organisations are still in the pilot phase – experimenting, testing, scaling. The ones ahead of the curve are building the decision-making infrastructure. They have an agreed process for deciding whether a new AI deployment should go ahead, who is accountable if it does not work, and how to measure impact beyond productivity metrics.

They Ask Who Is Not in the Room

The most consistent behaviour I see in leaders navigating this well is that they ask a specific question before major AI decisions: who is affected by this but is not in the room right now? And then they change the room.

The rushed decisions that have had to be reversed – the ones we read about every few months – were almost universally made without the right people at the table. The frontline workers. The affected communities. The people whose roles or data or opportunities were most at stake.

What do AI-ready leaders do differently?

AI-ready leaders separate what AI can do from what they choose to use it for. They invest in psychological safety so people can question AI decisions without fear. They have moved from piloting AI to governing it, with clear accountability structures and decision-making frameworks. And they consistently ask which of the people most affected by an AI decision are not currently in the room, and then they change the room.

The One Question Every Board Should Ask

Albana asked me to leave this audience with a single question. I always find it hard to give just one. But the one I kept returning to throughout our conversation was this:

Are the people most affected by AI in your organisation in the room when the decisions about it are being made?

This question works because it is specific and answerable. In most organisations, the answer is no. And that no is your starting point.

Albana offered a companion framing that I think is equally powerful: who is not in the room – and what is the cost of their absence?

That second question moves from audit to consequence. It is not just about who is missing. It is about what you are getting wrong because they are not there.

What is the most important question a board can ask about AI?

Are the people most affected by AI in your organisation in the room when decisions about it are being made? This question surfaces whether decision-making is representative, whether AI is being built and deployed with or for the people it affects, and where accountability gaps exist. The companion question – who is not in the room and what is the cost of their absence – moves from audit to consequence.

AI Is Not a Technology Problem Waiting for a Technical Solution

At the close of our conversation, Albana offered the most precise summary I have heard of what this work is actually about.

AI is not a technology problem waiting for a technical solution. It is a leadership question – for leaders who are willing to ask harder questions, hold clear lines, and make values-centred, equity-centred decisions.

That means resisting the race. It means slowing down the decision-making even as the technology speeds up. It means being the person in the room who asks what we are rushing towards, and insisting on a real answer before the next investment is approved.

The AI-ready leader is not the one with the most certifications or the most advanced AI stack. They are the one with the clearest values, the most honest questions, and the courage to govern something they do not yet fully understand.

AI-ready leadership is not about having the answers. It is about creating the conditions where the right questions get asked – and the right people are in the room to hear them.

Watch the Full Conversation

The full fireside chat – The AI-Ready Leader: What It Really Takes to Lead When AI Is Already Here – is available to watch. The conversation covers all of the themes explored in this article, including the equity dimension, the governance gap, and the specific questions every board should be asking right now.

About Jenny Garrett OBE

Jenny Garrett OBE is a leadership expert, executive coach and founder of Jenny Garrett Global, a boutique leadership development consultancy in its 20th year. She works with boards and executive teams across the NHS, UK Parliament, financial services and beyond, at the intersection of inclusive leadership, AI-ready leadership, and organisational equity.

Jenny is an MIT AI Strategy and Leadership Programme alumna, and the co-author of AI for Equity: Creating a More Equitable Society for All (Emerald Publishing, September 2026), co-written with her daughter Leah-Sunshine Garrett. She co-hosts the AI for Equity podcast with Leah.

She developed the HEARt of AI™ framework for AI-ready leadership and the EQUITAS™ framework for equitable AI governance.

Explore Jenny’s work:

→ AI-Ready Leadership programmes and resources

→ Developing an AI Mindset – article for HR Leaders

→ AI for Equity podcast (co-hosted with Leah-Sunshine Garrett)